This article explains NetApp write operations. In clustered Data ONTAP, a write request can land on any node in the cluster, regardless of which node owns the storage. As a result, a write is either a direct access (the receiving node owns the volume) or an indirect access (the request must be forwarded to the owning node). Write requests are not sent to disk immediately; they are held until a consistency point (CP) occurs. NVRAM is another NetApp component, and it holds the redo logs. NVRAM provides a safety net for the interval between the acknowledgement of a client write request and the commitment of the data to disk.

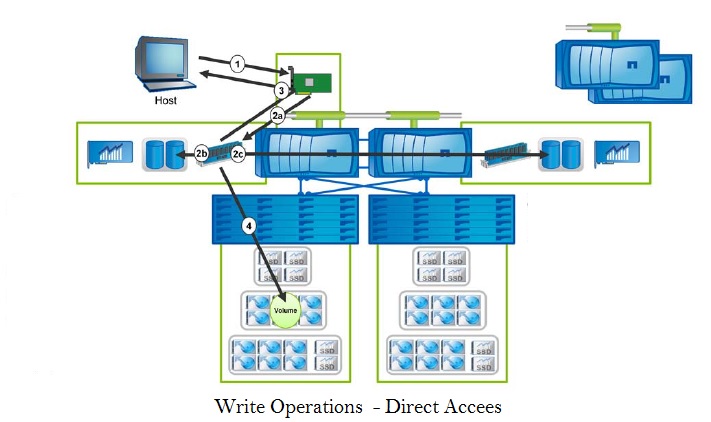

WRITE OPERATIONS (DIRECT DATA ACCESS):

Write operations for direct access take the following path through the storage system:

- The write request is sent to the storage system from the host via a NIC or an HBA.

- The write is simultaneously processed into system memory and logged in NVRAM and in the NVRAM mirror of the partner node of the HA pair.

- The write is acknowledged to the host.

- The write is sent to storage in a consistency point (CP).
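To make the ordering concrete, here is a minimal Python sketch of the direct-access path. It is a toy model, not ONTAP code: the `Node` class, its fields, and the use of dictionaries for memory and disk are all hypothetical. What it illustrates is that the host receives its acknowledgement once the write is in memory and in both NVRAM logs, while the disk is only touched at the CP.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Node:
    """Toy model of one controller in an HA pair (hypothetical, not ONTAP code)."""
    name: str
    memory: dict = field(default_factory=dict)          # write cache in system memory
    nvram_log: list = field(default_factory=list)       # this node's NVRAM journal
    partner_mirror: list = field(default_factory=list)  # copy of the partner's NVRAM log
    disk: dict = field(default_factory=dict)            # persistent storage
    partner: Optional["Node"] = field(default=None, repr=False, compare=False)

    def write(self, block: int, data: bytes) -> str:
        # Steps 1-2: cache the write in memory and log it both in local NVRAM
        # and in the NVRAM mirror held by the HA partner.
        self.memory[block] = data
        entry = (block, data)
        self.nvram_log.append(entry)
        if self.partner is not None:
            self.partner.partner_mirror.append(entry)
        # Step 3: acknowledge the host before anything reaches disk.
        return "ack"

    def consistency_point(self) -> None:
        # Step 4: flush cached writes from memory (not from NVRAM) to disk,
        # then drop the log entries that are now safely on disk.
        self.disk.update(self.memory)
        self.memory.clear()
        self.nvram_log.clear()
        if self.partner is not None:
            self.partner.partner_mirror.clear()
```

For example, after two nodes are paired and `node_a.write(7, b"hello")` returns `"ack"`, `node_a.disk` stays empty until `node_a.consistency_point()` runs.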

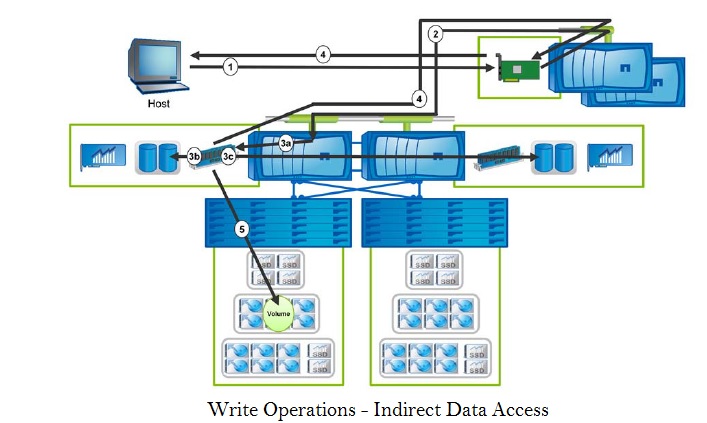

WRITE OPERATIONS (INDIRECT DATA ACCESS):

Write operations for indirect data access take the following path through the storage system:

- The write request is sent to the storage system from the host via a NIC or an HBA.

- The write is processed and redirected (via the cluster-interconnect) to the storage controller that owns the volume.

- The write is simultaneously processed into system memory and logged in NVRAM and in the NVRAM mirror of the partner node of the HA pair.

- The write is acknowledged to the host.

- The write is sent to storage in a CP.
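The only difference from direct access is the extra hop over the cluster interconnect. A hedged sketch of that routing decision, assuming a hypothetical `VOLUME_OWNER` map from volume name to owning node (a real cluster derives ownership from the aggregate that contains the volume, which this toy model skips):

```python
# Hypothetical ownership map: volume name -> node that owns it.
VOLUME_OWNER = {"vol_sales": "node1", "vol_hr": "node2"}


def route_write(receiving_node: str, volume: str) -> list[str]:
    """Return the hops a write takes before it is logged in NVRAM (toy model)."""
    owner = VOLUME_OWNER[volume]
    if owner == receiving_node:
        # Direct access: the node that received the request owns the volume.
        return [receiving_node]
    # Indirect access: forward over the cluster interconnect to the owner,
    # which then logs the write in its NVRAM and in its HA partner's mirror.
    return [receiving_node, "cluster-interconnect", owner]


def access_type(receiving_node: str, volume: str) -> str:
    """Classify a write as direct or indirect based on the route it takes."""
    return "direct" if len(route_write(receiving_node, volume)) == 1 else "indirect"
```

Everything after the owning node is reached is the same as the direct path: memory, NVRAM, partner mirror, acknowledgement, CP.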

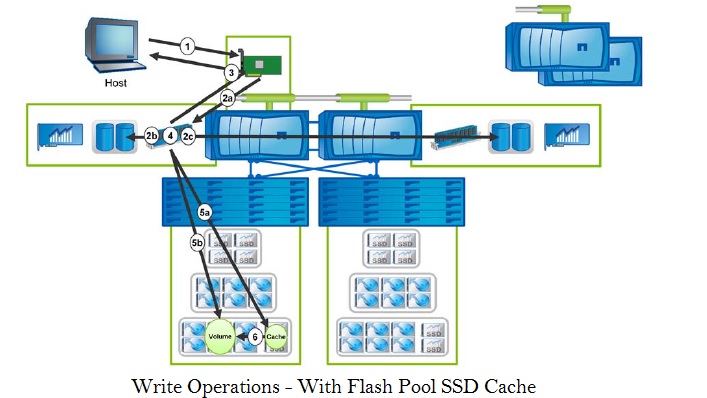

WRITE OPERATIONS (FLASH POOL SSD CACHE):

Write operations that involve the SSD cache take the following path through the storage system:

- The write request is sent to the storage system from the host via a NIC or an HBA.

- The write is simultaneously processed into system memory and logged in NVRAM and in the NVRAM mirror of the partner node of the HA pair.

- The write is acknowledged to the host.

- The system determines whether the random write is a random overwrite.

- A random overwrite is sent to the SSD cache; other writes are sent to the HDDs.

- Writes that are sent to the SSD cache are eventually evicted from the cache to the disks, as determined by the eviction process.
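The placement decision can be sketched as follows. This is a simplified model under stated assumptions: a write counts as a random overwrite if it is random and targets a block that already exists, the `is_random` flag is supplied by the caller rather than detected, and the FIFO eviction is a placeholder, not ONTAP's actual eviction process.

```python
from collections import OrderedDict


class FlashPoolSketch:
    """Toy placement model; the FIFO eviction here is a placeholder, not ONTAP's policy."""

    def __init__(self, ssd_capacity: int) -> None:
        self.ssd_capacity = ssd_capacity
        self.ssd: OrderedDict[int, bytes] = OrderedDict()  # SSD cache, oldest entry first
        self.hdd: dict[int, bytes] = {}

    def place(self, block: int, data: bytes, is_random: bool) -> str:
        # A random overwrite is a random write to a block that already exists.
        is_overwrite = block in self.hdd or block in self.ssd
        if is_random and is_overwrite:
            self.ssd[block] = data
            self.ssd.move_to_end(block)
            self._evict_if_full()
            return "ssd"
        # Any other write goes to the HDDs; drop a stale cached copy if present.
        self.hdd[block] = data
        self.ssd.pop(block, None)
        return "hdd"

    def _evict_if_full(self) -> None:
        # Evicted blocks are written back from the SSD cache to the HDDs.
        while len(self.ssd) > self.ssd_capacity:
            block, data = self.ssd.popitem(last=False)
            self.hdd[block] = data
```

The first write to a block lands on HDD; a later random overwrite of the same block is cached on SSD until eviction pushes it back down.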

Consistency Point (CP):

A consistency point occurs in the following scenarios:

- An NVRAM buffer fills up. Writes are cached in system memory, which acts as the write cache, and once the NVRAM buffer logging them is full, they are flushed to disk.

- A 10-second timer expires.

- A resource is exhausted or reaches a predefined threshold, so the writes must be flushed to disk.
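The three triggers can be expressed as a single check. In this sketch only the 10-second timer comes from the description above; the parameter names and the single boolean `resource_exhausted` flag are hypothetical simplifications of whatever internal thresholds the system uses.

```python
from typing import Optional

CP_TIMER_SECONDS = 10.0  # the ten-second timer described above


def cp_trigger(nvram_buffer_used: int, nvram_buffer_size: int,
               seconds_since_last_cp: float, resource_exhausted: bool) -> Optional[str]:
    """Return the reason a CP should start now, or None (simplified model)."""
    if nvram_buffer_used >= nvram_buffer_size:
        return "nvram buffer full"
    if seconds_since_last_cp >= CP_TIMER_SECONDS:
        return "timer expired"
    if resource_exhausted:
        return "resource exhausted"
    return None
```

Whichever condition is met first starts the CP; on a lightly loaded system the timer is usually what fires.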

What happens when back-to-back CPs occur?

- When the first NVRAM buffer reaches capacity, a CP starts flushing the cached writes from memory to disk, and new writes are logged in the second buffer.

- If the second buffer also reaches capacity while the writes from the first buffer are still being flushed to disk, the next CP cannot start. It can start only after the first flush is complete.
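A toy model of the double-buffered journal shows why back-to-back CPs stall incoming writes. The buffer size, the method names, and the `"stalled"` return value are illustrative assumptions; the real system exposes nothing like this interface.

```python
class DoubleBufferedLog:
    """Toy model of a double-buffered NVRAM journal (sizes and names are hypothetical)."""

    def __init__(self, half_size: int) -> None:
        self.half_size = half_size
        self.halves: list[list] = [[], []]  # the two NVRAM buffers
        self.active = 0                     # buffer currently receiving log entries
        self.cp_in_progress = False         # True while the previous CP is flushing

    def log_write(self, entry) -> str:
        buf = self.halves[self.active]
        if len(buf) < self.half_size:
            buf.append(entry)
            return "logged"
        # The active buffer is full, so a new CP is needed.
        if self.cp_in_progress:
            # Back-to-back CP: the previous flush has not finished, so the
            # new CP cannot start and the incoming write has to wait.
            return "stalled"
        self._start_cp()
        self.halves[self.active].append(entry)
        return "logged (new CP started)"

    def _start_cp(self) -> None:
        # Switch logging to the other buffer, whose old entries belong to a
        # CP that has already completed, and begin flushing memory to disk.
        self.active = 1 - self.active
        self.halves[self.active].clear()
        self.cp_in_progress = True

    def finish_cp(self) -> None:
        # Called once the CP's writes from memory have reached disk.
        self.cp_in_progress = False
```

With `half_size=2`, the third write starts a CP, the fifth write stalls while that CP is still running, and it is accepted again after `finish_cp()`.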

NVRAM:

- During a CP, writes are sent to disk from system memory, not from NVRAM. NVRAM is therefore not a write buffer.

- It is battery-backed memory that holds the redo logs in case of a power failure or system crash.

- It is a double-buffered journal of write operations.

- It is mirrored between the storage controllers of an HA pair.

- Writes held in system memory are logged in NVRAM, where they are mirrored and persistent.

- It is used only for writes, not for reads.

- It stores redo logs (short-term transaction logs), which typically cover less than 20 seconds of writes.

- It is read only after a system crash or power failure; otherwise, it is never read back.

- It enables rapid acknowledgement of client-write requests.

- It is very fast, so logging writes to it adds little latency to client writes.
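Because NVRAM is read only after a crash or power failure, its role can be summarized as a redo-log replay. A minimal sketch, assuming the log is a list of hypothetical `(block, data)` entries and that the disk already holds everything committed by the last completed CP:

```python
def recover_after_crash(disk: dict, nvram_log: list) -> dict:
    """Rebuild the state of the data by replaying the NVRAM redo log (toy model)."""
    # System memory was lost in the crash, so every write acknowledged since
    # the last completed CP survives only in the battery-backed NVRAM log.
    recovered = dict(disk)
    for block, data in nvram_log:  # replay in the order the writes were logged
        recovered[block] = data
    return recovered               # a real system would then commit this with a CP
```

Replaying in log order matters: if the same block was written twice, the later entry wins, just as it would have in memory before the crash.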

Hope this article is informative to you. Share it! Comment! Be sociable!
